This article appeared in the proceedings of SAFECOMP'99. Copyright belongs to Springer Verlag Lecture Notes in Computer Science, who have kindly agreed to allow publication on this web site.

Evaluating the Contribution of DesktopVR for Safety-Critical Applications

Chris Johnson

Department of Computing Science, University of Glasgow, Glasgow, Scotland.

Email: johnson@dcs.gla.ac.uk, http://www.dcs.gla.ac.uk/~johnson

Desktop virtual reality (desktopVR) provides a range of benefits for training and visualisation tasks in safety-critical environments. Users can exploit conventional keyboards and mice to manipulate photo-realistic images of real-world objects in three dimensions using QuicktimeVR. Other approaches, such as the Virtual Reality Modeling Language (VRML), enable users to navigate through three-dimensional models of virtual environments. Designers can exploit these techniques in training tools. They provide users with an impression of environments that are either too dangerous or too expensive to allow direct interaction during familiarisation exercises. DesktopVR also supports the visualisation of safety-critical information. For example, it can be used to provide engineers with an overview of the increasingly large and complex data sets that are being gathered about previous accidents and incidents. However, it is also important to balance the appeal of these techniques against a longer-term requirement that they actually support the tasks for which they are being developed. This paper, therefore, describes the problems that arose when two design teams attempted to validate the claimed benefits of desktopVR as a training tool for a regional fire brigade and as a visualisation tool for accident statistics.

Keywords: DesktopVR; Training; Visualisation; Accident Reports; Human Computer Interaction.

1. Introduction

DesktopVR techniques are being introduced into an increasing range of safety-related applications (Johnson, 1998). However, it is difficult to determine whether these presentation techniques actually support users' needs (Kaur, Sutcliffe and Maiden, 1998). The following sections illustrate this argument by focussing on the problems that arose during the validation of desktopVR in two safety-critical applications. The first focuses on the use of QuicktimeVR within a training package for a regional fire brigade. The second case study concentrates on the use of VRML to support the visualisation of events leading to major accidents. These are appropriate examples for this paper because they illustrate two radically different applications of desktopVR to support safety. In the former case, three-dimensional presentation techniques are being used to provide fire fighters with practical skills in the operation of specialist rescue equipment. In the latter case, desktopVR techniques provide a more abstract overview of systems failure and human error during major accidents. In spite of such differences, it is possible to identify a number of common problems that arose during the validation and testing of these interfaces:

· it is hard to identify benchmarks that can be used to assess the usability of desktopVR in safety-critical systems;

· it can be difficult to identify the specific user groups and tasks that are to be supported by desktopVR in safety-critical systems;

· it is difficult to measure the contribution of desktopVR because it, typically, only forms part of a larger interface design within a complex safety-critical system.

These issues are not unique to desktopVR interfaces. Summative evaluation and benchmarking complicate the design of many other interactive systems. However, the consequences of failing to support user tasks make these problems particularly severe for safety-critical interface design. The risks of failure are also compounded by the lack of design guidance that developers can call upon during the application of desktop virtual reality.

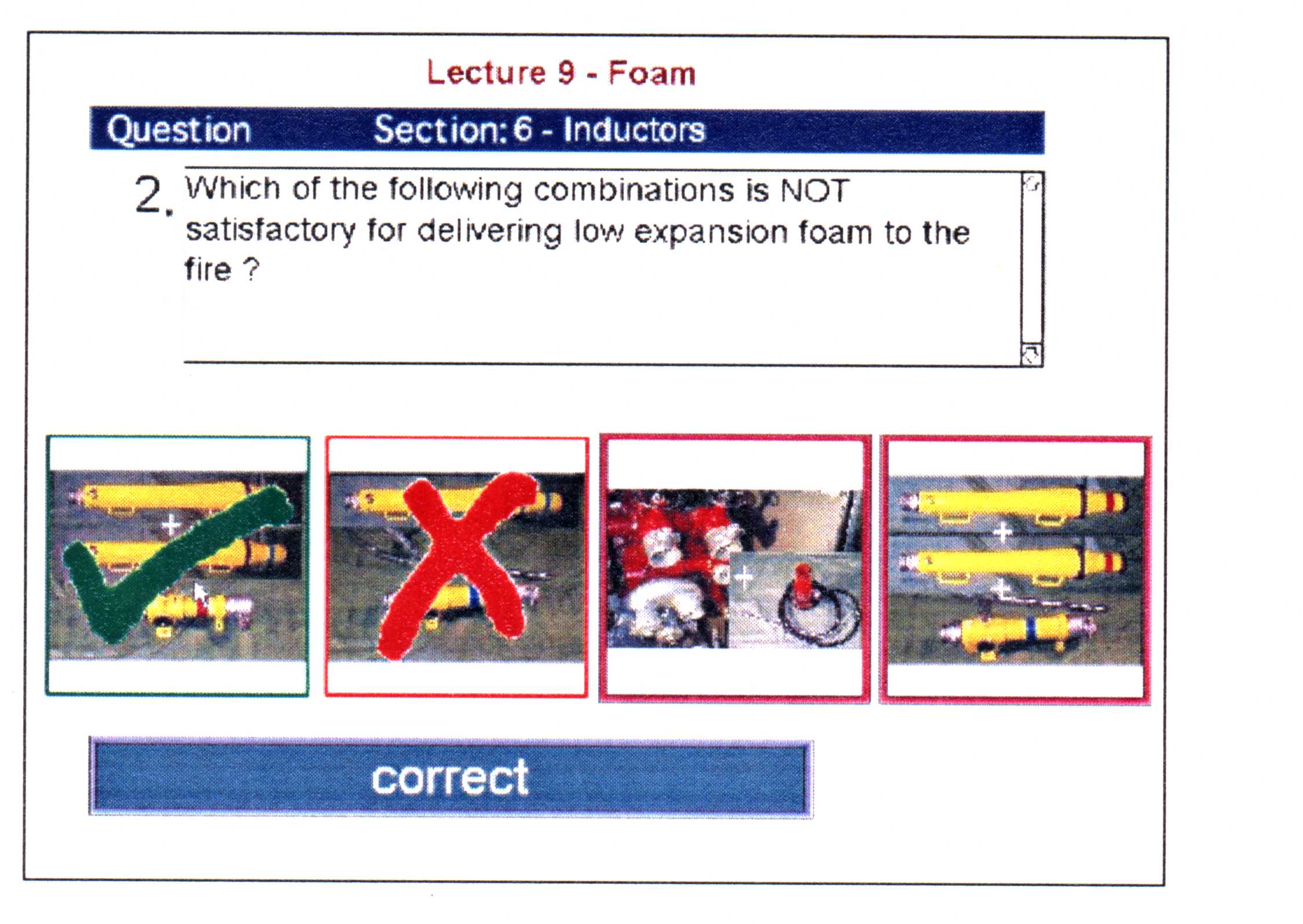

2. The Fire Brigade Case Study

The training of Fire Officers is intended to provide both practical and theoretical skills. For example, they must learn how to operate breathing apparatus during fires. They are also expected to have specialised technical knowledge. Officers must know how to apply the latest foam technology to combat a range of different fires. Computer Aided Learning (CAL) tools are perceived by many in the Fire Brigade as a cost-effective means of delivering technical knowledge and practical skills. They are particularly appropriate for an organisation whose members are scattered amongst many different stations.

Fire fighters are often characterised by activist learning styles. It has been argued that they learn more effectively through direct experience than through the mediation of books or lectures (Johnson, 1998a). DesktopVR techniques, therefore, provide important benefits for the development of CAL in the fire brigade. Fire fighters can learn by interacting with objects in virtual environments rather than by passively listening to conventional lectures. Figure 1 shows how desktopVR techniques were applied to a Heavy Rescue Vehicle (HRV) training package. These vehicles contain specialist lifting and cutting equipment that may be necessary to extricate people from major road traffic accidents. The photo-realistic facilities of QuicktimeVR provide a three-dimensional representation of the storage area inside the HRV. Individual items of equipment can be found by exploring the desktopVR view, shown in the middle panel of Figure 1, or by selecting an item from the list on the right.

Figure 1: The Heavy Rescue Vehicle Training Package

The HRV package also provided detailed information about the equipment on the vehicle. Hypertext was used to provide electronic access to existing technical notes. Video clips were used to show the equipment "in action". Figure 2 shows how QuicktimeVR also enabled fire fighters to manipulate individual items of equipment. An important point about these models is that they provide three-dimensional views of items of equipment that are too heavy or cumbersome for an individual to lift. They are also available at times when fire officers cannot have direct access to the HRV itself. In our case study, the HRV had to be available to respond to emergencies for almost 24 hours each day. This implied that individual fire officers had almost no time to familiarise themselves with its equipment before they were actually involved in an incident.

Figure 2: An Object Rotation of Lucas Cutters on the Heavy Rescue Vehicle

3. The Accident Reporting Case Study

The previous section described how desktopVR provides fire fighters with an initial introduction to the equipment stored on an HRV. In contrast, the second case study focuses on the application of desktopVR to support the visualisation of more abstract safety-critical information. Most accident investigation agencies now place their reports on the World Wide Web. This is a relatively cheap means of disseminating information to companies and regulators (Johnson, 1997). However, we recently conducted an international survey to assess the effectiveness of these web sites. The questionnaire can be accessed on:

http://www.dcs.gla.ac.uk/~snowdonp/quest.html

The results were disappointing (Snowdon and Johnson, 1999). Most readers found that web-based accident reports were poorly structured. Many found them harder to read than previous paper versions. Such reactions arise because most investigation authorities simply convert paper-based reports into html or pdf format. Few agencies exploit the novel presentation techniques that are supported by today’s communications networks. In contrast, Figure 3 shows how an imagemap can be integrated into the Sheen report on the sinking of the Herald of Free Enterprise. Users select the relevant sections of the image to view all sections of the report that relate to the car deck, the bridge and so on. It is important to stress that these techniques are not intended to replace prose descriptions of major accidents. They do, however, provide a relatively low-cost means of augmenting the information in a manner that actually supports the readers’ comprehension of the events leading to human ‘error’ and systems ‘failure’. Figure 4 illustrates how desktopVR techniques can be used to extend such visualisations into three dimensions. These images show the layout of a cockpit using QuicktimeVR. Hypertext links again provide a means of connecting objects in these environments with more detailed textual descriptions. This helps readers to gain a much more direct impression of the physical context in which particular events occur. Current work with the UK Health and Safety Laboratory is extending this approach from accident reports to more general litigation. Juries can be shown three-dimensional models of an accident scene to help them follow the legal arguments that are presented during court cases.

Figure 3: Integrating Imagemaps into Accident Reports
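The imagemap technique can be illustrated with a minimal Python sketch of the underlying lookup: a click on the image is tested against a set of rectangular hotspots, each of which is linked to the report sections that discuss that part of the vessel. The region names "car deck" and "bridge" come from the description of the Sheen report; the coordinates, the section identifiers and the names `Region` and `sections_for_click` are assumptions introduced purely for illustration and do not describe the original implementation.

```python
from dataclasses import dataclass
from typing import List, Sequence, Tuple


@dataclass(frozen=True)
class Region:
    """A rectangular hotspot on the image, linked to report sections."""
    name: str
    left: int
    top: int
    right: int
    bottom: int
    sections: Tuple[str, ...]  # hypothetical section identifiers

    def contains(self, x: int, y: int) -> bool:
        """True if the pixel (x, y) falls inside this hotspot."""
        return self.left <= x <= self.right and self.top <= y <= self.bottom


# Hypothetical hotspots and section identifiers (assumptions, not real data).
REGIONS = [
    Region("car deck", 0, 120, 400, 200, ("sec-10", "sec-11")),
    Region("bridge", 250, 20, 330, 80, ("sec-12",)),
]


def sections_for_click(x: int, y: int,
                       regions: Sequence[Region] = REGIONS) -> List[str]:
    """Return the report sections linked to the first hotspot under a click."""
    for region in regions:
        if region.contains(x, y):
            return list(region.sections)
    return []  # the click landed outside every hotspot
```

A click at (300, 50) falls inside the hypothetical "bridge" hotspot and so returns the single section linked to it, while a click outside every hotspot returns an empty list.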

Figure 4: QuicktimeVR model for Aircraft Evacuation/Cockpit Familiarisation/Seat Booking

The approach, illustrated in Figure 4, helps users to view location-dependent information. It is less effective as a means of viewing the course of human error and systems failure over time. Figure 5 illustrates a three-dimensional timeline that avoids this limitation. It contains markers that indicate the actions and events that occurred at particular moments during an accident. The user can walk along the line in three dimensions to view events from the start of the accident looking forward into the future. They can also look at the events from any other perspective to gain a better view of the things that happened immediately before and after that moment in time. Flags are used to represent the events leading to the accident. If a flag is planted into the line then an exact timing is available. A flag that has not been planted indicates that the exact time is not known.

Figure 5: Using VRML to Visualise Events in Major Accidents
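The flag convention used on the timeline can be sketched as a small data structure. The following Python fragment is a hedged illustration, not the VRML model itself: it assumes that every event has at least an approximate time, that distance along the line is proportional to elapsed time, and that an unplanted flag is drawn hovering above the line. The names `Flag` and `layout`, the scale factor and the hover height are all hypothetical.

```python
from dataclasses import dataclass
from typing import Iterable, List, Tuple


@dataclass(frozen=True)
class Flag:
    """One event on the timeline."""
    label: str
    seconds: float  # time since the start of the accident (may be approximate)
    exact: bool     # True: timing known, flag is planted; False: not planted


def layout(flags: Iterable[Flag],
           metres_per_second: float = 0.5,
           hover_height: float = 1.0) -> List[Tuple[str, float, float]]:
    """Place flags along the line in time order.

    Returns (label, distance_along_line, height) triples. Planted flags
    sit on the line (height 0.0); unplanted flags hover above it.
    """
    placed = []
    for flag in sorted(flags, key=lambda f: f.seconds):
        height = 0.0 if flag.exact else hover_height
        placed.append((flag.label, flag.seconds * metres_per_second, height))
    return placed


# Hypothetical events, invented for illustration only.
example = [
    Flag("power lost", 90.0, exact=True),
    Flag("alarm raised", 0.0, exact=True),
    Flag("crew responds", 40.0, exact=False),
]
```

Calling `layout(example)` orders the three hypothetical events by time and lifts only "crew responds" off the line, which mirrors the distinction between planted and unplanted flags.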

4. The Problems of Validating DesktopVR

The previous sections briefly described how desktopVR can be used to support two very different safety-critical applications. This section goes on to describe the problems that arose when we tried to assess the usability and the utility of these systems.

4.1 Problem 1: Establishing Benchmark Criteria for DesktopVR

The first problem in evaluating any system is to determine the criteria that can be used to assess "usability" (Kalawsky, 1998). This immediately raised problems for the first case study because we were developing an entirely new system. At the start of the project, the fire fighters did not have access to any CAL applications. None of them had used or even heard about desktopVR. This created considerable problems because we had no means of establishing the "benchmark" criteria against which to evaluate the new interfaces. The best that we could do was to issue a questionnaire to assess the fire fighters' attitudes towards existing training techniques. Figures 6 and 7 present the results for 27 fire fighters from two different stations within the same region. These results guided the development of our desktopVR system. In particular, we strove hard to avoid the negative reaction towards passive lectures, shown in Figure 7.

Figure 6: Perceived "Ease of Learning" in the Fire Brigade Case Study

Figure 7: Perceived "Effectiveness of Learning" in the Fire Brigade Case Study

Our problems began when we attempted to show that the new desktopVR system was better than the previous approaches. We were particularly concerned to show an improvement over conventional lectures and videos; the CAL system was not intended to reduce the amount of drill work or real incidents that the fire fighters were exposed to. Initially, we decided that we would re-issue the questionnaire. This would enable us to assess the fire fighters’ attitudes towards lectures, videos, drills, incidents AND the new CAL tools. However, this raised a number of objections:

the Hawthorne effect.

The fact that this project had support from the highest levels of the fire brigade made it difficult for us to judge whether we were receiving unbiased responses to questions about the utility of desktopVR within a training tool. The term "Hawthorne effect" derives from studies of workers at Western Electric's Hawthorne Works, reported in 1939, whose performance improved simply because their activities were being monitored; they produced more components because they knew that they were being watched.

the problems of measuring embedded effects.

It was less important for the fire brigade to show that our novel presentation techniques were effective than it was to ensure that the overall application satisfied their training objectives. The scores from a comprehension test were less important than the fire fighters’ performance during real incidents. This created a number of practical problems. It can be extremely difficult to identify objective criteria for real-world performance in safety-critical tasks. For instance, the time that it takes to extract a casualty is affected by many different contextual factors ranging from the make and condition of their vehicle through to the medical condition of that casualty. Of course, more qualitative criteria can be applied but this raises the problem of validating and recording those assessments in a manner that supports effective, long-term comparisons between subjects.

the problems of measuring longitudinal effects.

The average length of service amongst the users of our system was fifteen years. Any subjective assessment after a few months’ access to the new technology would provide a poor impression of the long-term effectiveness of desktopVR. Such validation exercises can be complicated by the fire fighters’ exposure to real-world incidents. During the validation of our system, one group was asked to use some of the theoretical material during a real incident. In balanced experimental techniques, half of this group should have been exposed to the CAL tool and half should have been exposed to conventional lectures. This would then have enabled us to make valid comparisons between these two different training techniques. It was impossible to achieve such a balance. In a longitudinal study, these problems raise further ethical problems because they imply that some fire fighters should be deprived of a training resource over a prolonged period to measure the effect of the absence of that resource on their performance.
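The balanced design that could not be achieved in practice can be made concrete with a short Python sketch. It shows one common way of allocating subjects to two conditions, namely shuffling the group at random and splitting it in half. This is an illustration of the general technique under stated assumptions, not a procedure used in the study; the function name `balanced_split`, the condition labels and the participant identifiers are hypothetical.

```python
import random
from typing import Dict, List, Optional, Sequence


def balanced_split(participants: Sequence[str],
                   seed: Optional[int] = None) -> Dict[str, List[str]]:
    """Randomly allocate participants to two training conditions.

    Half are assigned to the CAL tool and half to conventional lectures.
    With an odd number of participants the lecture group gets one extra.
    """
    rng = random.Random(seed)  # a fixed seed makes the allocation repeatable
    shuffled = list(participants)
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    return {"cal": shuffled[:half], "lecture": shuffled[half:]}


# Hypothetical participant identifiers, for illustration only.
crew = [f"fire-fighter-{i}" for i in range(1, 11)]
groups = balanced_split(crew, seed=1)
```

The sketch assumes that every subject can be assigned freely to either condition. The operational constraints described above, where a whole group attends the same incident, are exactly what breaks that assumption.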